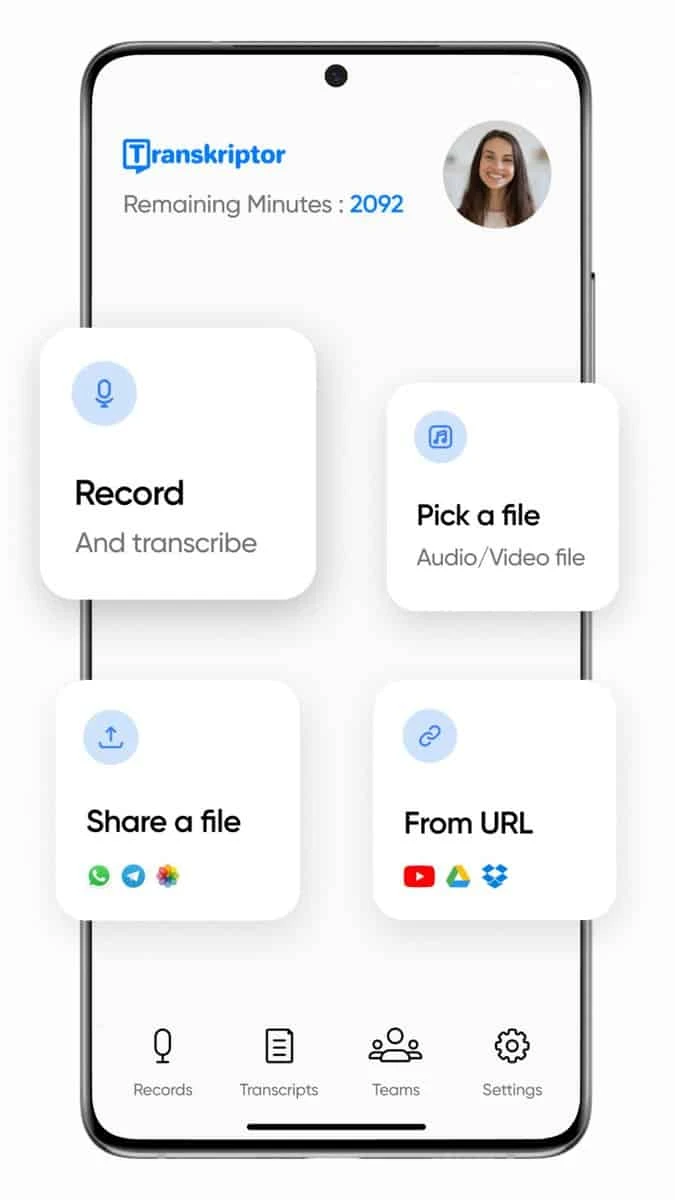

How to Transcribe Videos with Transkriptor?

Transkriptor makes it easy to turn the audio in your videos into text, the way you want it. It only takes a few clicks!

Upload your Video.

We support a wide variety of formats. If your file is in a rare or unusual format, convert it to something more common, such as mp3, mp4 or wav, before uploading.

Leave the Transcription to Us.

Transkriptor will automatically transcribe your video file within minutes. When your order is done, you will receive an email informing you that your text is ready.

Edit and Export your Text

Log in to your account and list your completed tasks. Finally, download or share the transcription files.
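If a file is in a rare format, the conversion mentioned in the upload step can be done locally before uploading. The sketch below is one possible way to do it in Python, assuming the free ffmpeg tool is installed on your computer. The function names and the list of "common" formats are illustrative assumptions based on the formats named above, not part of Transkriptor itself.

```python
import shutil
import subprocess
from pathlib import Path

# Formats named above as common; an illustrative set, not an official list.
COMMON_FORMATS = {".mp3", ".mp4", ".wav"}


def build_ffmpeg_command(source: str, target_ext: str = ".mp3") -> list[str]:
    """Return the ffmpeg argument list that converts `source` to `target_ext`."""
    if target_ext not in COMMON_FORMATS:
        raise ValueError(f"unsupported target format: {target_ext}")
    output = Path(source).with_suffix(target_ext)
    # -y overwrites an existing output file; -i names the input file.
    return ["ffmpeg", "-y", "-i", source, str(output)]


def convert_if_needed(source: str, target_ext: str = ".mp3") -> str:
    """Convert `source` only when its extension is not already a common one."""
    if Path(source).suffix.lower() in COMMON_FORMATS:
        return source  # already in a common format, nothing to do
    if shutil.which("ffmpeg") is None:
        raise RuntimeError("ffmpeg was not found on PATH")
    command = build_ffmpeg_command(source, target_ext)
    subprocess.run(command, check=True)
    return command[-1]  # path of the converted file
```

For example, calling `convert_if_needed("lecture.mkv")` would run ffmpeg and produce `lecture.mp3`, while a file that is already mp3, mp4 or wav is returned unchanged.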

Why Is Automatic Video Transcription Important?

According to research, video is the most popular way of communicating among people of all age groups. As of around 2017, about two-thirds of adults in America owned a smartphone. That is why transcribing video content is a hot topic. People won’t keep watching a video if they can’t understand its content, and a transcript lets them follow and review what is said. Students and professionals have been using transcripts for a very long time, whether to make schoolwork easier or, at the corporate level, mainly for note-taking. Transcripts are especially useful when audio is not available, such as during a teleconference or meeting. But it doesn’t stop there. Transcribing videos has a plethora of use cases. Whatever business you have, you might end up using it too.

Transcribe Video to Boost Online Popularity

Making your video content accessible to a wide range of people is essential if you want to maximize viewership. When you transcribe YouTube videos, this means turning every sentence in your script into captions, organizing the on-screen text as subtitles or scrolling text, and making sure your voice and any other sounds are clear and easy on the ears.

Good video content creators find ways for all types of audiences to understand their content. They also make sure that viewers who are hearing impaired can enjoy the video without difficulty.

How is Video Transcription Used?

Initially, videos reached around 3% more people than text. By adding captions, we can reach as many people as possible. Captions are created where there is a need and are meant for viewers with any hearing or reading impairment. You should avoid anything that makes it harder to see a speaker, such as excessive camera movement or poor lighting. You should also avoid background noises that distract from the voice of the person speaking.

Another thing to avoid is flashing content, because it can trigger seizures in people who have photosensitive epilepsy. Having captions on your video will also help, since their audience extends beyond deaf and hard-of-hearing viewers. Videos for news and teaching purposes have become more common in recent times, so you need to make sure that your audience can access the content and clearly understand the message.

What Should You Take into Account When You Transcribe Video?

Captions are important for people who are deaf and also for those who might otherwise miss some of your message, either because they have turned off the sound to save battery life or because they are watching on a smaller device where the audio is harder to hear. Your captions help them understand what is going on in the video even when the sound is incomplete, which makes videos more accessible. In this day and age, captions are necessary, and they make it possible for everyone to enjoy media no matter what platform they are watching on.

It’s important that the content of the video is transcribed at a pace that gets the message across clearly. Subtitles should be of high quality, with bright colors and contrast that make them easy to read and help them stand out against darker sections of the video.

A video without a transcript is incomplete. It could be entertaining to watch, especially if the video includes a voiceover performer, but it can’t actually meet the accessibility guidelines set out by law.

Subtitles and transcripts are essential for making videos engaging for people with hearing or visual impairments or other disabilities. Video publishers also benefit from making their content more accessible, because viewers who need captions or transcripts are more likely to become loyal viewers of your channel when they are able to follow the content.

By transcribing your videos, Transkriptor can help you boost your business and view count, or help you succeed in your exams. Take advantage of our affordable and reliable services today!

Write things on the go.

See What Our Customers Have Said About Us!

We serve thousands of people of every age, profession and country. Click on the comments or the button below to read more honest reviews about us.

Everything is very good, it is not expensive, good relation between price and quality, and it is also quite fast. Great precision in relation to the times of the subtitles and in the recognition of the words. Very few corrections had to be made.

What I liked most about Transkriptor is how it has a high accuracy. With an easy-to-use platform, I only needed to make punctuation adjustments.